Impact Report

2025

Contents

- A Message from LAS Leadership

- Our Mission

- LAS By the Numbers

- Scholarly Publications

- Six Times Faster Transcription Speeds with TensorRT-LLM

- Driving Detection: Enhancing Military Vehicle Recognition with Synthetic Data

- Advancing Video Triage Workflows: A Year of Innovation

- NC State Designers, LAS Enhance Confidence Score Visualizations

- Boosting Analyst Efficiency with AI-Powered Summaries

- National Security Policy Incorporates LAS Work

- AI Benchmarking

- Advancing AI Security Through Scalable Model Scanning and Behavioral Analysis

- Summer Conference on Applied Data Science

- Industry Spotlight: Rockfish Research

- View the PDF Flipbook

A Message from LAS Leadership

This past year has seen LAS renew its focus on ensuring that its efforts have the potential to impact stakeholder challenges.

While doing so, LAS has remained committed to leveraging its unique collaboration model, where academic and industry experts work closely with government researchers and analysts in an environment that spans classified and unclassified domains.

The benefits of this approach can be seen in this impact report. Analytic approaches first demonstrated as part of unclassified LAS projects have matured into operational tools for audio and video analysis. Design concepts developed in academic design studios are now available to users as operational prototypes. And LAS’s unclassified infrastructure has become a key enabler of model benchmarking and scanning initiatives.

NC State remains committed to supporting the unique, cross-sector, collaborative ecosystem at LAS, which allows the government to engage the broader analytic community in the development of innovative, mission-relevant solutions. By continuing to facilitate agile collaborations, drive efforts towards customer-centric outcomes, and support the transition of project outcomes, NC State will be a trusted partner in LAS’s efforts to drive innovation and impact for our stakeholders.

Matthew Schmidt, Ph.D., Principal Investigator, LAS

As LAS concludes its 2025 efforts, we reflect on a year of extraordinary achievement. LAS has proven that rapid innovation isn’t just possible in the intelligence community (IC)—it’s essential.

By bridging intelligence needs with cutting-edge technological solutions, LAS has delivered tangible results that matter: novel capabilities, accelerated development timelines, and mission-critical solutions when they are needed most.

LAS hasn’t just delivered results in 2025, it has established a sustainable model for continued innovation. By proving that the IC can harness emerging technology at mission-speed, LAS has charted a path forward to tackle the complex challenges that lie ahead. As we look to the future, LAS stands ready to continue driving innovation and impact for the National Security Agency and IC.

Many thanks to all our stakeholders, partners, collaborators, and customers! Our success belongs to a diverse ecosystem of contributors, across the agency and IC. Through your engagement, feedback, and partnership, you have helped shape the lab’s innovation model that truly serves mission needs. This collaborative spirit has been the foundation of every breakthrough, every capability deployed, and every problem solved.

With a solid foundation of proven methodologies, robust partnerships, and clear mission alignment, LAS is well-equipped to continue delivering critical capabilities precisely when the nation needs them most.

Sheila Bent, Acting Director, LAS

Our Mission

The Laboratory for Analytic Sciences is a partnership between the intelligence community and North Carolina State University that develops innovative technology and tradecraft to help solve mission-relevant problems. Founded in 2013 by the National Security Agency and NC State, each year LAS brings together collaborators from three sectors – industry, academia and government – to conduct research that has a direct impact on national security.

LAS By the Numbers

Our real-world impact is improving intelligence analysis through collaboration.

33 Software Tools

11 Models

9 Datasets

5 Prototypes

36 Technical Reports

20 Research Manuscripts

19 Project Write-ups and Presentations

275 Event Attendees

85 Students

25 Faculty Partners

6 Industry Partners

Research Overview

A new blueprint for intelligence innovation

The LAS approach is straightforward yet transformative: connect the nation’s experts with state-of-the-art artificial intelligence (AI) and machine learning (ML) capabilities; create an environment where innovation can move at mission speed; and focus relentlessly on real operational needs. This formula has enabled the NSA to tackle national security challenges with unprecedented agility, setting a new benchmark for how the intelligence community can harness emerging technology.

In 2025, LAS research focused on three core areas:

Sensemaking

Sensemaking projects aim to improve analysts’ ability to efficiently and effectively process, understand, and extract value from ever-increasing amounts of multimodal data, like audio, video, images, and text.

Human-Centered AI

HCAI projects examine how to best integrate automation into workflows while keeping the focus on the analyst.

Operationalizing AI/ML

OAIML projects address how AI and ML techniques can be most beneficial under operational constraints like financial, time, or cognitive resources.

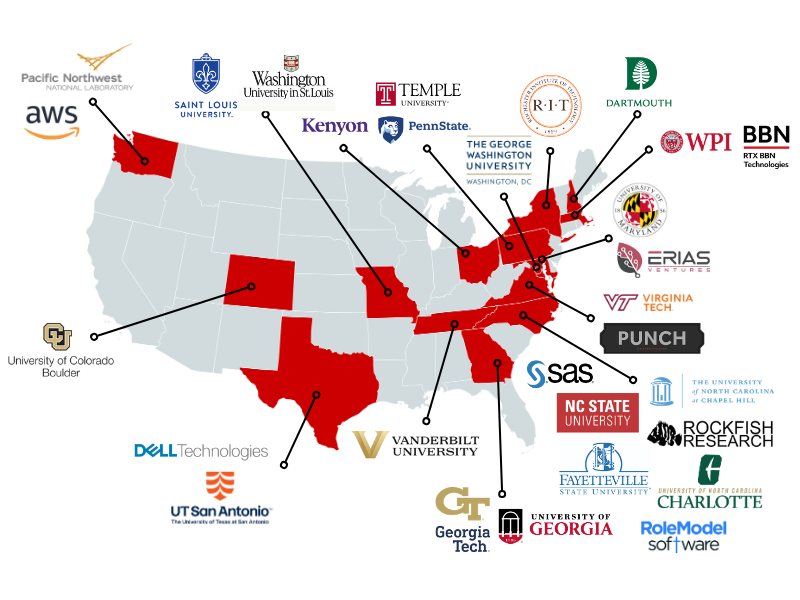

Partnerships

Meet the 2025 Collaborators

Scholarly Publications

We’re proud to share the latest research articles published by our collaborators.

- Armstrong, Helen, Ashley L. Anderson, Rebecca Planchart, Kweku Baidoo, and Matthew Peterson. “Addressing Uncertainty in LLM Outputs for Trust Calibration Through Visualization and User Interface Design.” VISIBLE LANGUAGE 59, no. 2 (2025): 176-217.

- Hannan, Tanveer, Md Mohaiminul Islam, Jindong Gu, Thomas Seidl, and Gedas Bertasius. “ReVisionLLM: Recursive vision-language model for temporal grounding in hour-long videos.” In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 19012-19022. 2025.

- Islam, Md Mohaiminul, Tushar Nagarajan, Huiyu Wang, Gedas Bertasius, and Lorenzo Torresani. “BIMBA: Selective-scan compression for long-range video question answering.” In Proceedings of the Computer Vision and Pattern Recognition Conference, pp. 29096-29107. 2025.

- Kolouju, Pranavi, Eric Xing, Robert Pless, Nathan Jacobs, and Abby Stylianou. “good4cir: Generating detailed synthetic captions for composed image retrieval.” In Proceedings of the Computer Vision and Pattern Recognition Conference, pp. 3148-3157. 2025.

- Pan, Yulu, Ce Zhang, and Gedas Bertasius. “BASKET: A large-scale video dataset for fine-grained skill estimation.” InProceedings of the Computer Vision and Pattern Recognition Conference, pp. 28952-28962. 2025.

- Van Gelder, Tim, Morgan Saletta, Richard De Rozario, Ashley Barnett, Tim Dwyer, Kadek Satriadi, and Christine Shahan Brugh. “Storytelling in intelligence: Theoretical foundations.”International Journal of Intelligence and CounterIntelligence 38, no. 4 (2025): 1298-1330.

- Wang, Ziyang, Shoubin Yu, Elias Stengel-Eskin, Jaehong Yoon, Feng Cheng, Gedas Bertasius, and Mohit Bansal. “VIDEOTREE: Adaptive tree-based video representation for LLM reasoning on long videos.” InProceedings of the Computer Vision and Pattern Recognition Conference, pp. 3272-3283. 2025.

- Wang, Ziyang, Jaehong Yoon, Shoubin Yu, Md Mohaiminul Islam, Gedas Bertasius, and Mohit Bansal. “Video-RTS: Rethinking reinforcement learning and test-time scaling for efficient and enhanced video reasoning.” In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, pp. 28114-28128. 2025.

- Xing, Eric, Pranavi Kolouju, Robert Pless, Abby Stylianou, and Nathan Jacobs. “ConText-CIR: Learning from concepts in text for composed image retrieval.” In Proceedings of the Computer Vision and Pattern Recognition Conference, pp. 19638-19648. 2025.

Audio Sensemaking

Six Times Faster Transcription Speeds with TensorRT-LLM

Accurate transcripts of recorded material are crucial to the intelligence community, so LAS has been implementing AI tools that improve transcript production speed.

OpenAI’s Whisper is an automatic speech recognition model that uses neural networks to achieve extremely low transcript errors. Like other neural networks, running Whisper requires a graphics processing unit (GPU) and is computationally expensive. By using two NVIDIA tools, TensorRT-LLM and Triton Inference Server, LAS improved Whisper’s runtime performance, cutting computation time by more than 80%.

Whisper is like a recipe, with several sequential steps. For each step, the computer has to tell the GPU to “launch a kernel.” The GPU launches the kernel, performs the step, and returns the result to the computer. This constant communication slows everything down. To solve this, LAS developers used TensorRT-LLM to create a CUDA graph, which is a list of instructions for the GPU to follow that merges many of the sequential steps into a single instruction/kernel. These are specific to both the Whisper model being run and the hardware it is being run on. The computer can tell the GPU to run the CUDA graph, substantially reducing back-and-forth communication overhead and eliminating the need for the GPU to send intermediate data back to the computer.

Whisper generates transcripts through a two-step process: it first encodes the audio signal into a data representation and then decodes that information to produce the text sequentially. Encoding is computationally expensive, while decoding is memory hungry. Triton Inference Server allows us to interleave these steps so the GPU can encode an audio stream while it decodes another, improving GPU utilization. These optimizations, along with several others, allow LAS to accurately transcribe six times as much text without increasing compute resources.

Video Sensemaking

Driving Detection: Enhancing Military Vehicle Recognition with Synthetic Data

From virtual worlds to real-world wins: LAS enables faster, more reliable detection of enemy military vehicles.

Detecting military vehicles in video data is a complex task, often hindered by messy, non-ideal conditions. Acquiring large-scale, diverse training datasets for this purpose is challenging due to high costs, safety and privacy concerns, and the difficulty of capturing rare or hazardous operational scenarios. Synthetic data generation offers a compelling solution, enabling controlled, scalable data creation that simulates conditions often impractical or impossible to replicate in real-world settings.

In collaboration with Fayetteville State University and agency partners, the LAS team harnessed simulators like Digital Combat Simulator (DCS) to quickly generate synthetic datasets of military vehicles under varied environmental conditions. DCS provides high-quality 3D models of worldwide military vehicles, diverse weather patterns, lighting scenarios, and camera parameters, as well as filters such as blur to enhance realism. By leveraging these features, extensive, photorealistic training sets can be created to encompass a wide array of real-world situations.

This innovative pipeline has proven effective in training AI models to reliably detect military vehicles under various environmental conditions. It addresses key challenges, including mitigating object occlusion in cluttered or partially obscured scenes, handling insufficient lighting, and identifying small, low-resolution objects. To maximize the pipeline’s capabilities, various methods were explored to balance real and synthetic data in unified training datasets. This approach not only enhances the scalability of synthetic data while preserving the fidelity of real-world imagery, but also significantly reduces the labeling burden through automatic annotation. By saving time and resources, this strategy enables more precise classification of military vehicles and fortifies the robustness of the AI models.

Looking ahead, synthetic data generation will remain a vital asset for advancing AI capabilities. By expanding the diversity and volume of training datasets and significantly reducing labeling efforts, synthetic data makes AI model development more efficient and cost-effective. This is particularly valuable for military vehicle detection and other complex applications where real-world data collection is challenging. As the field progresses, synthetic data will undoubtedly be a key driver of innovation and AI reliability.

Advancing Video Triage Workflows: A Year of Innovation

The EYEGLASS tool increases search and discovery with custom-trained models.

The past year has seen significant advancements in how analysts triage video data at the edge. Driven by a combination of technological exploitation and close collaboration with key stakeholders, LAS has delivered game-changing capabilities directly to analysts. Whether by reviewing the output of fine-tuned models within their workflows or by leveraging tools like EYEGLASS, analysts can more quickly sort and filter their results into actionable foreign intelligence content.

In partnership with technological experts from across NSA and partnering agencies, LAS has aggregated relevant data and deployed custom, fine-tuned models to support analysts’ ability to triage video content directly at the edge. These models have enabled analysts to uncover foreign intelligence insights more rapidly by providing more accurate and reliable visual content. With an integrated daily feedback loop, the team will be able to deploy regular refinements to the model to improve its triage capability and ensure its output remains relevant. As one of the first to leverage a cross-agency model-sharing agreement, the team worked hand-in-hand with technical experts to ensure these fine-tuned models had maximum utility for all partners.

Through participation in agency working groups and initiatives, LAS is ensuring our research and prototypes meet current and future analyst workflow challenges. Whether through advocacy and code-sharing or active development within agency workshops, LAS researchers are addressing the most pressing needs of analysts and supporting the mission of the NSA.

As the landscape of video intelligence continues to evolve, it is clear that there will be ongoing challenges and opportunities for innovation. The advancements of the past year provide a strong foundation for future progress, and we are likely to see continued improvements in video triage workflows in the years to come. By staying at the forefront of technological innovation and maintaining close collaboration with key stakeholders, it is possible to ensure that video triage workflows remain effective, efficient, and closely aligned with analysts’ needs.

Human-Centered AI

NC State Designers, LAS Enhance Confidence Score Visualizations

Cross-functional innovation brings clarity to the interpretation of intelligence metadata.

LAS transformed confidence score visualizations through a partnership between language analysts, user experience (UX) experts, and developers. This effort addressed a critical pain point for language analysts who currently encounter five different visualization methods across four tools, creating cognitive burden during time-sensitive intelligence work. The project generated three visualization designs that make confidence scores more immediately interpretable, helping analysts efficiently triage audio cuts and assess speakers. Each design underwent usability testing with agency analysts. One visualization was selected for further testing and eventual integration into corporate tools.

The project’s success stems from the lab’s collaborative approach with faculty researchers Helen Armstrong and Matt Peterson from the NC State College of Design. This proximity to academic expertise, alongside partnerships with agency experts in UX, development, and language analysis, enabled a rigorous design process built on direct analyst engagement. The collaborative template fostered iterative co-creation focused on analyst needs.

NSA analysts from across the agency provided support to the project by enabling their language analysts to engage with LAS and NC State partners. Analyst participation in the process, from problem identification to prototype evaluation, shaped design concepts and provided real-world context. LAS’s collaborative approach established trust between operational analysts and academic partners, encouraging feedback and innovative thinking across organizational boundaries.

“This experience offered a unique glimpse into creating the tools we use every day,” noted one senior language analyst who engaged with the NC State designers. “Opportunities like this empower us to contribute to tool development, ensuring they are intuitive and effective.”

NC State College of Design research assistants Kweku Baidoo and Rebecca Planchart brought exceptional talent to the project. Selected for their performance in NC State’s Design Master’s course, they had experience with analyst-focused challenges, making them well-suited to tackle visualization complexities.

By standardizing the confidence score visualization across analyst tools, this initiative reduces the need for mental translation. With design and testing complete, the team seeks to integrate model health before implementation, with final testing planned for late FY 2026.

Boosting Analyst Efficiency with AI-Powered Summaries

AI in action: LAS transforms analytical workflows with smart summaries.

DISTILLEDENTITY (DE) is a tool that provides government analysts with concise, AI-generated summaries of verified reporting, streamlining analyst workflows by addressing a critical gap and rapidly orienting analysts to previously unknown information of interest. By distilling information from existing reports, DE enables analysts to quickly comprehend the significance of unfamiliar data without manually reviewing numerous reports. This capability enhances efficiency, ensuring that analysts have the information they need to focus on high-priority tasks and make more effective decisions.

The DISTILLEDENTITY tool is a prime example of the power of collaboration between the Laboratory for Analytic Sciences and its partners. Conversations in early 2025 between LAS and one of its government partners identified internal analyst workflow inefficiencies, prompting discussions between LAS developers and government analysts that culminated in the development of DE. LAS identified the pain points in the partner’s workflows and leveraged existing capabilities to deliver a timely solution. Other government partners supported LAS and their team in making DE effective and more widely accessible to government analysts. DE is an easy-to-understand example of how AI/ML technology is powering government missions. It stands as a testament to the transformative power of AI in analysis, offering a glimpse into a future where technology and human expertise work seamlessly together to enhance knowledge.

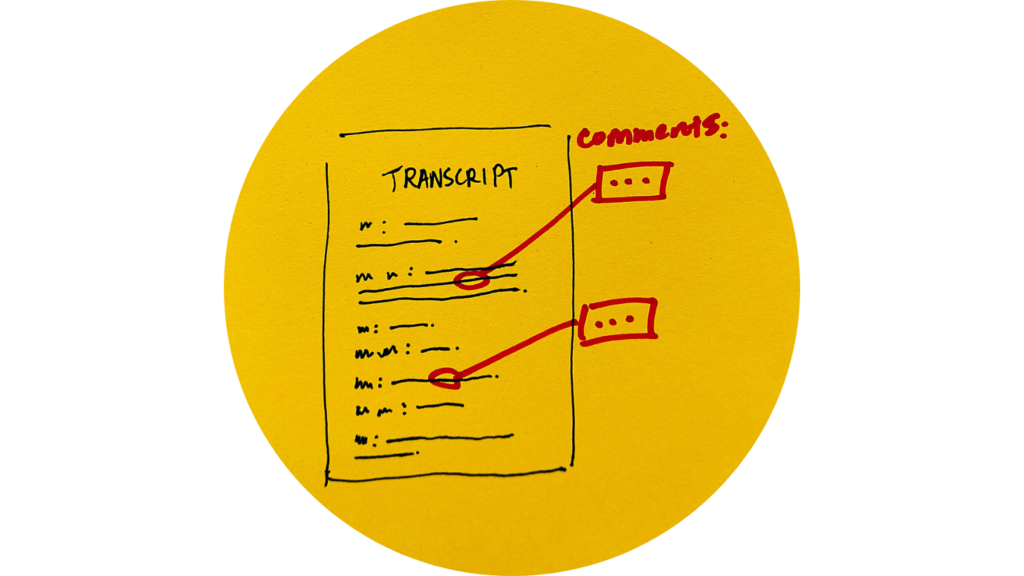

National Security Policy Incorporates LAS Work

The operator comment taxonomy tool developed at LAS provides transcript readers with more context for understanding ambiguous references.

read the full story

Operationalizing AI/ML

AI Benchmarking

The lab is assessing AI performance and risk for mission-critical reliability.

LAS’s benchmarking efforts assess AI performance and risk for mission-critical reliability. With the emergence of ChatGPT in late 2022, the research, development, and adoption of generative AI into everyday life has accelerated. This is also true for the intelligence community (IC). Driven by key partnerships within the IC, LAS has rapidly built a generative AI benchmarking capability to support unclassified AI evaluation of available models. The goal of the LAS AI benchmarking project is to, first, assess the capability of an AI model to perform specific activities and, second, to compare AI models to determine which is more effective.

The generative, non-deterministic nature of AI models has introduced the need for more robust performance and AI risk assessments. This helps to provide confidence as we look to include AI into IC workflows. LAS created a benchmarking taxonomy, measuring across the key areas of AI capability, AI security, AI safety, and AI computation, to give a more holistic assessment of each AI model. This taxonomy incorporates evaluations on how the model performs at a given task, along with, for example, the impacts of its non-deterministic generation (think hallucinations), and the impact on the compute environment that is running the model (cybersecurity). Starting on a low-side infrastructure, LAS has created the capability to store and run AI benchmarks, establishing a code repository to manage and share benchmarks. The lab also hosts key partners to collaborate and build new benchmarks. LAS builds each benchmark using the open-source inspect benchmarking framework, which promotes the sharing of AI benchmarks with partners.

These AI benchmarking goals are driven by customer challenges as LAS and its stakeholders work together to maximize mission impact.

LAS is expanding its model-based AI benchmarking capability to include multi-modal AI models and AI agents for a holistic evaluation of AI systems.

Advancing AI Security Through Scalable Model Scanning and Behavioral Analysis

Dynamic Model Scanner detects threats pre-deployment; Model Monitor Run builds baselines to spot behavioral outliers needing investigation.

In 2025, LAS significantly expanded its AI security capabilities by enhancing its existing model-scanning infrastructure and developing a model-monitor runtime capability to analyze system behavior when executing different machine learning models across tasks and inputs. Together, these systems strengthen the National Security Agency’s ability to assess, validate, and safely operationalize machine learning models. Over the past year, the team successfully executed over 500 model scans and transferred more than 200 validated models into the agency’s secure environment.

Building on the prior year’s foundation, the model scanner matured into a comprehensive, production-scale security pipeline. The system now includes an eight-stage workflow featuring advanced model extraction, multi-format conversion, integrity checks, and parallelized security scanning. Enhanced validators—including ONNX structural verification, Safetensors analysis, JSON validation, and Python static security checks—significantly increased the depth and reliability of detection. Automated EC2 provisioning, a retry system for AWS capacity constraints, and background workers for file transfer, ZIP generation, and PDF reporting contributed to dramatically improved throughput and operational efficiency.

Complementing static analysis, the newly launched model monitor runtime introduced full system-call behavioral tracing for runtime model evaluation. By executing models on standardized inputs within isolated environments, the system captures detailed patterns of file access, process creation, network behavior, and GPU utilization. Support for 30 AI task types across NLP, vision, audio, and multimodal domains enabled broad coverage of modern model architectures. This capability enabled LAS to build behavioral baselines across hundreds of models, enabling anomaly detection based on real-world execution patterns.

Together, these advancements moved LAS from a preliminary model scanning capability to a robust, multi-layered AI security framework. The 2025 improvements substantially increased scale, precision, and automation, ensuring the agency can safely adopt emerging AI technologies with confidence and accountability.

Summer Conference on Applied Data Science

Researchers and developers spent the summer working directly with government analysts to address their most significant pain points.

39 Participants

Government, industry, and academia

39 Project Reports

8 Weeks

Plus high-side integration

Summer Conference on Applied Data Science

Week 9: Integration

Government analysts and developers are applying innovations from SCADS 2025 to advance tradecraft on the high side.

Technical research at the 8-week 2025 Summer Conference on Applied Data Science focused on automatic summarization, recommender systems, and human-computer interaction with a mission to answer these critical analyst questions:

- What can a tailored daily report (TLDR) tell me about how my data has changed since I last saw it?

- What can a TLDR tell me across multiple data sources?

- How can a TLDR help me achieve my analytic goals within my organization’s frame of reference?

Solutions developed by participants at SCADS have enhanced operational capabilities, thanks to government participants applying these unclassified outcomes to real-world needs during week 9 and beyond. Notable achievements include the successful testing of three projects on mission data, with transition plans finalized or in progress. Two participants achieved a reduction in video storage without compromising performance by leveraging video key frame summarization techniques on IC data. Another participant pioneered the testing and implementation of a server compatible with the government compute resources, enabling optimized, seamless model deployments. Lastly, a participant developed a powerful tool to query imagery datasets for objects in the image then identify associated geolocations based on the content of the image. This has been tested on government datasets and is being configured for widespread use.

End-to-end TLDR prototypes continue to be developed on government data, inspiring SCADS participants to rework their code to configure the summarization and recommender system pipeline to work within the IC infrastructure for a variety of use cases. Specifically, advancements on a low-density language-focused TLDR are underway, focusing on adaptations specific to the intelligence community.

SCADS has culminated in four highly successful years, fostering a dynamic ecosystem of innovation, collaboration, and application. These advancements drive improvements to analyst workflows, positioning SCADS at the forefront of applied data science in the intelligence community.

SCADS 2025: Help for the Overburdened Analyst

People

Industry Spotlight: Rockfish Research

Flexibility is key for this small business bringing advanced AI solutions to LAS.

Chris Argenta, the principal AI/ML researcher at Rockfish Research, worked as a 2025 collaborator with the Laboratory for Analytic Sciences (LAS) to develop high-risk and high-value solutions for the intelligence and defense communities in artificial intelligence, advanced analytics, and human/AI interfaces.

In his most recent project with LAS, Argenta and his team developed a system for validating AI responses and visually communicating how responses differ between AI models

Argenta says the opportunity for funding and the ability to work with other industry and government professionals through LAS is important for the growth of his company.

“There’s this built-in flexibility,” Argenta says. “To be able to tailor as you go and move with what’s interesting is exciting.”

Rockfish founder Chris Argenta prototyped OpenTLDR, the central end-to-end framework for tailored daily reports at the Summer Conference on Applied Data Science.

After participating in the LAS Summer Conference on Applied Data Science a couple of years ago, he encouraged his colleague Abigail Browning to apply for the 2025 conference. Browning brought her background in communication, digital media, and user experience to the problem set.

“We’re designing something that the government can use,” says Browning. “[Feedback from] government participants allows us to create what works well, so the likelihood of adoption is higher.”

Meet the Interns

View the PDF Flipbook

View the 36-page impact report as a digital flipbook.

Share your story.

We are looking for stories about the impact of our work for our annual LAS Impact Report. If you have received an award, published an article, developed a product, applied research to real-world challenges, joined a board, or otherwise made an impact through your work with LAS, let us know with this form.